Unlock the value of data on your terms

Organisations can connect with others securely and with trust to share, process, and analyse data on their terms, with their data sovereignty protected.

Introducing the newest addition to our data space portfolio for Catena-X

Connect to Catena-X and beyond...

For our customers, only the best will do, so we partner with Bosch Connected Industry, the Industry 4.0 software house from Bosch. You can now choose the Digital Twin Registry powered by Bosch, proven robust at the scale of millions of digital twins and integrated into our product. This new solution is the easiest way to raise the value of your data for new use cases across the product lifecycle. Seamlessly integrated, this cutting-edge database offers standout efficiency and reliability in creating and managing digital twins across the supply chain.

Unlock the power of real-time insights, enhanced collaboration, and streamlined operations. Elevate your digital transformation journey with our Data Space solutions, including the Digital Twin Registry, powered by Bosch Semantic Stack!

Start monetizing now: unlock the value of your data

Our state-of-the-art services help organizations meet imminent legal and sustainability challenges with trusted, sovereign, end-to-end secure solutions for their data journey.

Create data chains

Sustainability

Connect with your suppliers to trace emissions throughout the manufacturing and supply chain and take data-driven actions to minimize your environmental impact for a greener future.

Circular Economy

Reuse, recycle and remanufacture: be the circular economy leader in your industry. Use Telekom’s strong partnerships across all levels of your value chain to create insightful data chains for responsible industrialization.

Traceability

We empower manufacturers and suppliers to implement traceability throughout their value chain. To help manufacturers comply with legal regulations, our solutions provide traceability from production through to recycling.

Modal travel shift

Enable trust between mobility providers so they can share data, unlocking more efficient use of mobility resources for a triple-win outcome where everyone’s a winner: consumers (better travel), providers (more business), and cities (cleaner, safer, and quieter).

Your journey to getting connected

1. Unlock the value of your data

Easily connect all of your data sources. We handle the complexity, you reap the benefits.

2. Get connected to any dataspace

We give you the power to choose and connect to any dataspace you want.

3. Share data on your terms

Decide who can see and use your data, and stay in control of your data rights.

4. Have your data safely delivered

Secure end-to-end protection keeps your data safe throughout the whole transaction and beyond.

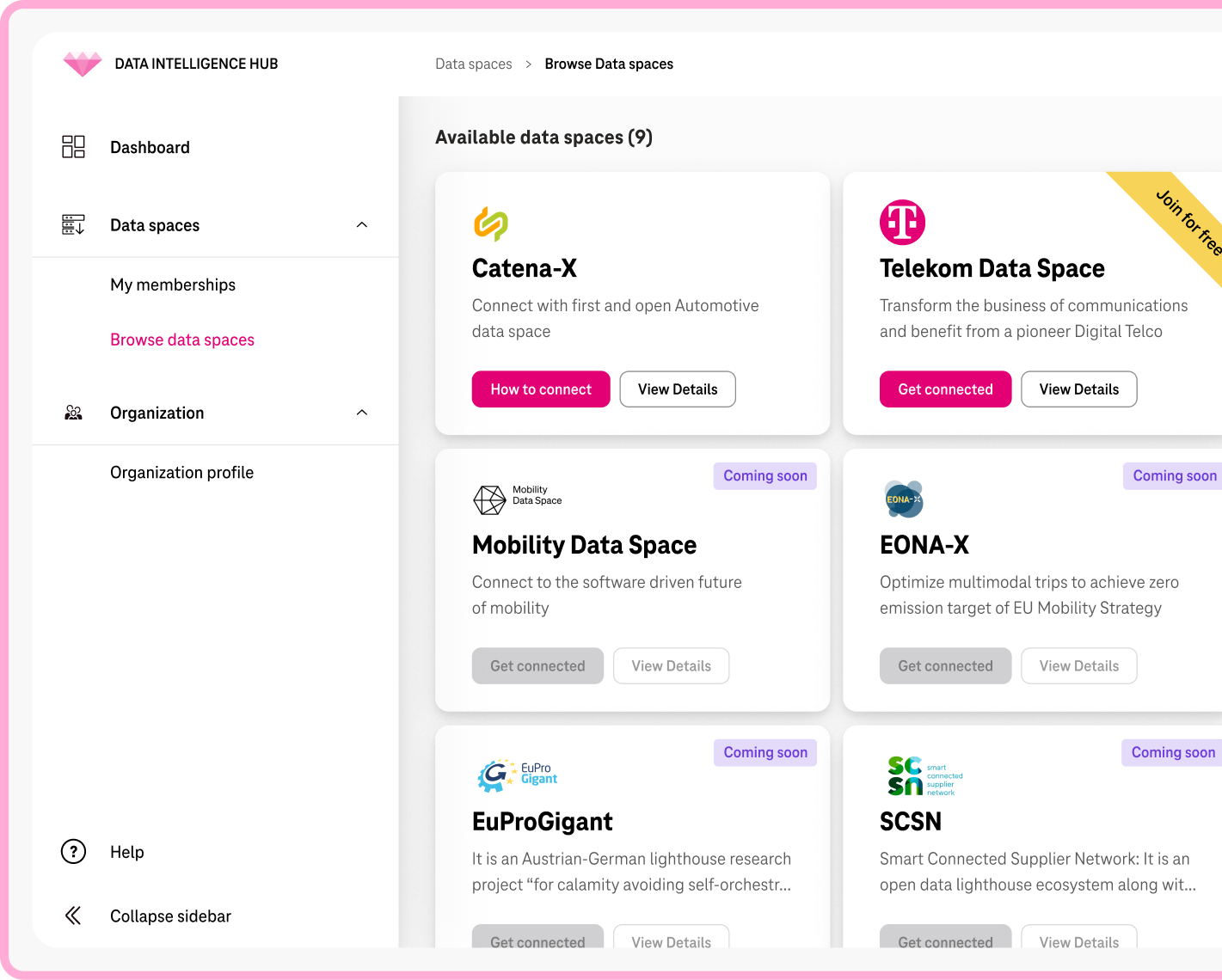

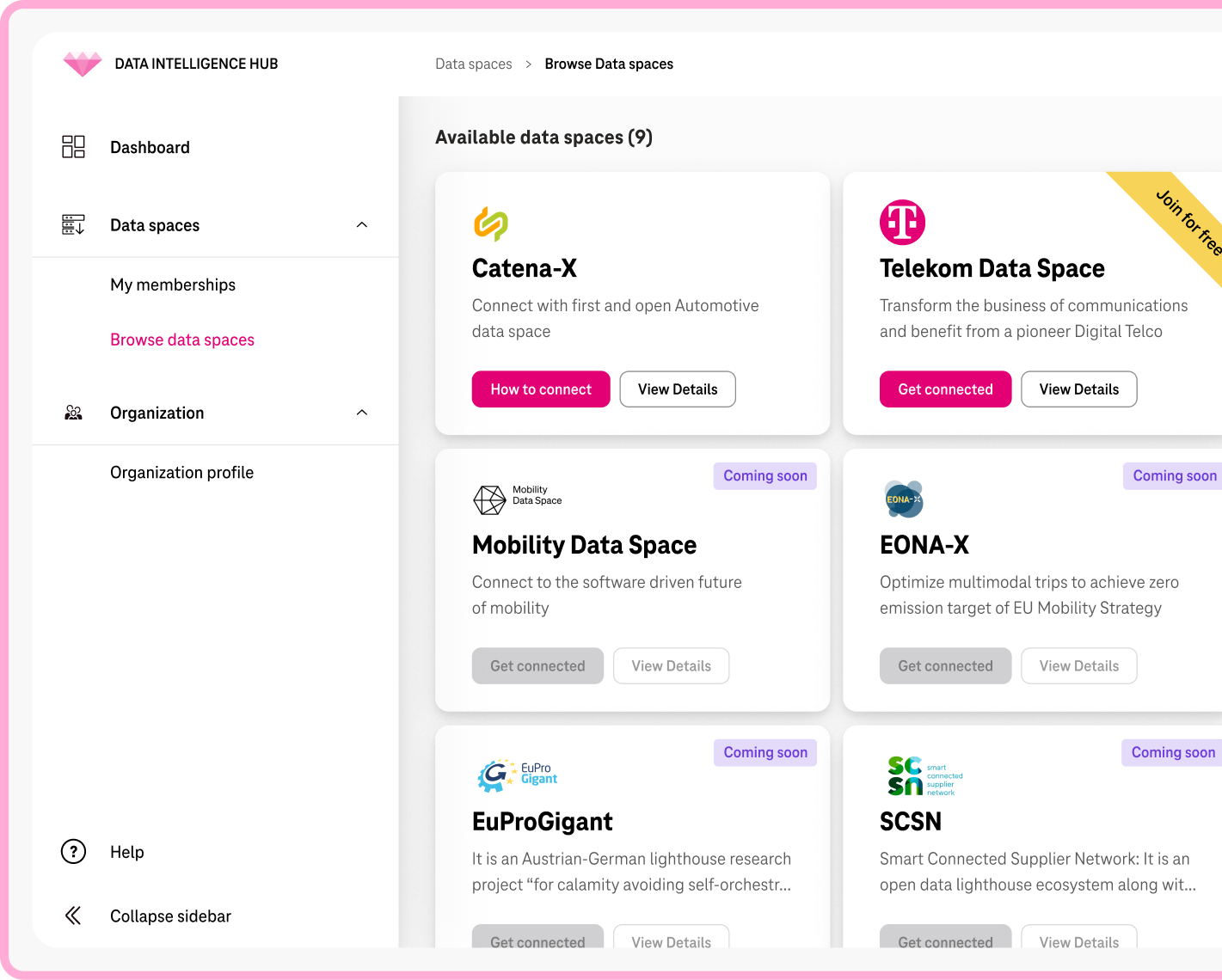

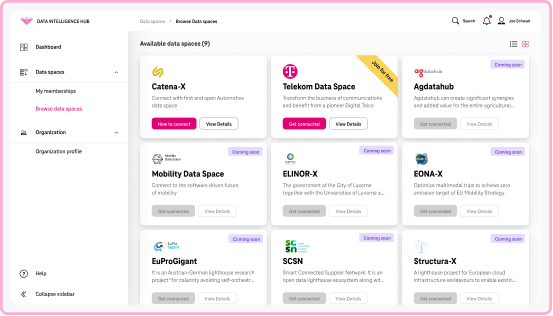

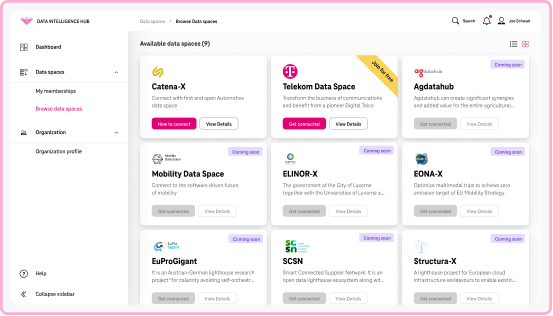

Explore dataspaces

The Telekom Data Intelligence Hub advantage

Trustful

Our services and solutions run on Deutsche Telekom’s independent and secure global network, so you can count on us when it comes to sharing data.

Intuitive and User-Friendly

Customer centricity and customer experience are at the core of our services. We strive for a plug-and-play experience, easy access, and quick onboarding to make our customers’ data transformation journey a delight.

Pioneers

A founding partner of Gaia-X and a board member of the IDSA, we shape both technology and business adoption as an active participant in the three leading dataspaces in automotive: Mobilithek/Mobility Data Space, Gaia-X 4 Future Mobility, and Catena-X.

Seamless Integration

End-to-end business solutions, from backend integration to standardized semantics, for efficient restructuring, process optimization and utilization of your data with minimal effort. Break up data silos across departments and companies.